
The human CRSP-Med coactivator complex is targeted by a diverse array of sequence-specific regulatory proteins. Using EM and single-particle reconstruction techniques, we recently completed a structural analysis of CRSP-Med bound to VP16 and SREBP-1a. Notably, these activators induced distinct conformational states upon binding the coactivator. Ostensibly, these different conformational states result from VP16 and SREBP-1a targeting distinct subunits in the CRSP-Med complex. To test this, we conducted a structural analysis of CRSP-Med bound to either thyroid hormone receptor (TR) or vitamin D receptor (VDR), both of which interact with the same subunit (Med220) of CRSP-Med. Structural comparison of TR- and VDR-bound complexes (at a resolution of 29 Å) indeed reveals a shared conformational feature that is distinct from other known CRSP-Med structures. Importantly, this nuclear receptor-induced structural shift seems largely dependent on the movement of Med220 within the complex.

Activity in the motor cortex predicts movements, seconds before they are initiated. This preparatory activity has been observed across cortical layers, including in descending pyramidal tract neurons in layer 5. A key question is how preparatory activity is maintained without causing movement, and is ultimately converted to a motor command to trigger appropriate movements. Here, using single-cell transcriptional profiling and axonal reconstructions, we identify two types of pyramidal tract neuron. Both types project to several targets in the basal ganglia and brainstem. One type projects to thalamic regions that connect back to motor cortex; populations of these neurons produced early preparatory activity that persisted until the movement was initiated. The second type projects to motor centres in the medulla and mainly produced late preparatory activity and motor commands. These results indicate that two types of motor cortex output neurons have specialized roles in motor control.

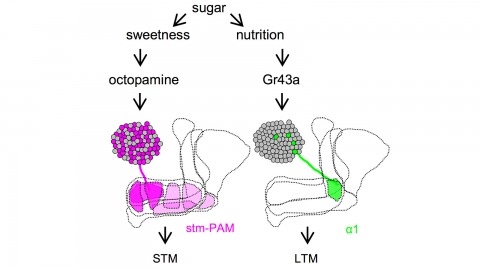

Drosophila melanogaster can acquire a stable appetitive olfactory memory when the presentation of a sugar reward and an odor are paired. However, the neuronal mechanisms by which a single training induces long-term memory are poorly understood. Here we show that two distinct subsets of dopamine neurons in the fly brain signal reward for short-term (STM) and long-term memories (LTM). One subset induces memory that decays within several hours, whereas the other induces memory that gradually develops after training. They convey reward signals to spatially segregated synaptic domains of the mushroom body (MB), a potential site for convergence. Furthermore, we identified a single type of dopamine neuron that conveys the reward signal to restricted subdomains of the mushroom body lobes and induces long-term memory. Constant appetitive memory retention after a single training session thus comprises two memory components triggered by distinct dopamine neurons.

Ionotropic glutamate receptors principally mediate fast excitatory transmission in the brain. Among the three classes of ionotropic glutamate receptors, kainate receptors (KARs) have a unique brain distribution, which has been historically defined by ³H-radiolabeled kainate binding. Compared with recombinant KARs expressed in heterologous cells, synaptic KARs exhibit characteristically slow rise-time and decay kinetics. However, the mechanisms responsible for these distinct KAR properties remain unclear. We found that both the high-affinity binding pattern in the mouse brain and the channel properties of native KARs are determined by the KAR auxiliary subunit Neto1. Through modulation of agonist binding affinity and off-kinetics of KARs, but not trafficking of KARs, Neto1 determined both the KAR high-affinity binding pattern and the distinctively slow kinetics of postsynaptic KARs. By regulating KAR excitatory postsynaptic current kinetics, Neto1 can control synaptic temporal summation, spike generation and fidelity.
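Why slower EPSC decay favors temporal summation can be illustrated with a deliberately simple model. The sketch below assumes, purely for illustration, that each EPSC rises instantaneously and decays as a single exponential, so that during a regular train the summed current peaks at the last input. The function name `summed_peak` and the decay constants are hypothetical and are not values reported in the study.

```python
import math


def summed_peak(n_spikes: int, isi_ms: float, tau_decay_ms: float) -> float:
    """Peak summed current after n evenly spaced inputs, in units of one EPSC.

    Assumes instantaneous rise and single-exponential decay (an illustrative
    simplification), so each earlier EPSC has decayed by exp(-elapsed / tau)
    at the moment of the last input.
    """
    if n_spikes < 1:
        raise ValueError("need at least one input")
    return sum(math.exp(-k * isi_ms / tau_decay_ms) for k in range(n_spikes))


# Hypothetical decay constants, chosen only to contrast fast vs. slow kinetics.
for tau in (5.0, 50.0):
    ratio = summed_peak(n_spikes=5, isi_ms=20.0, tau_decay_ms=tau)
    print(f"tau = {tau:>4} ms -> peak after 5th input is {ratio:.2f}x a single EPSC")
```

Because the sum is a geometric series with ratio exp(-ISI/τ), lengthening τ at a fixed input interval raises the summed peak; real synaptic currents have finite rise times and more complex decay, so this only captures the qualitative trend.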

Pigmentation divergence between Drosophila species has emerged as a model trait for studying the genetic basis of phenotypic evolution, with genetic changes contributing to pigmentation differences often mapping to genes in the pigment synthesis pathway and their regulators. These studies of Drosophila pigmentation have tended to focus on pigmentation changes in one body part for a particular pair of species, but changes in pigmentation are often observed in multiple body parts between the same pair of species. The similarities and differences of genetic changes responsible for divergent pigmentation in different body parts of the same species thus remain largely unknown. Here we compare the genetic basis of pigmentation divergence between Drosophila elegans and D. gunungcola in the wing, legs, and thorax. Prior work has shown that regions of the genome containing the pigmentation genes yellow and ebony influence the size of divergent male-specific wing spots between these two species. We find that these same two regions of the genome underlie differences in leg and thorax pigmentation; however, divergent alleles in these regions show differences in allelic dominance and epistasis among the three body parts. These complex patterns of inheritance can be explained by a model of evolution involving tissue-specific changes in the expression of Yellow and Ebony between D. elegans and D. gunungcola.

BRCA2 is an essential tumor suppressor protein involved in promoting faithful repair of DNA lesions. BRCA2 activity must be precisely regulated so that the protein acts when and where it is needed. Here, we quantified the spatio-temporal dynamics of BRCA2 in living cells using aberration-corrected multifocal microscopy (acMFM). Using multicolor imaging to identify DNA damage sites, we were able to quantify its dynamic motion patterns in the nucleus and at DNA damage sites. While a large fraction of BRCA2 molecules localized near DNA damage sites appear immobile, an additional fraction of molecules exhibits subdiffusive motion, providing a potential mechanism to retain an increased number of molecules at DNA lesions. Super-resolution microscopy revealed inhomogeneous localization of BRCA2 relative to other DNA repair factors at sites of DNA damage. This suggests the presence of multiple nanoscale compartments in the chromatin surrounding the DNA lesion, which could play an important role in the contribution of BRCA2 to the regulation of the repair process.
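Subdiffusive motion is commonly identified from the mean-squared displacement (MSD) of single-molecule tracks, which scales as MSD(t) ∝ t^α, with α = 1 for normal diffusion and α < 1 for subdiffusion. The sketch below is a generic illustration of that idea, not the acMFM analysis pipeline used in the study: it computes a time-averaged MSD and fits α on log-log axes. The function names and the ±0.2 classification tolerance are hypothetical choices.

```python
import numpy as np


def time_averaged_msd(track: np.ndarray, max_lag: int) -> np.ndarray:
    """Time-averaged MSD for lags 1..max_lag of an (N, d) trajectory."""
    if max_lag >= len(track):
        raise ValueError("max_lag must be smaller than the trajectory length")
    msd = np.empty(max_lag)
    for lag in range(1, max_lag + 1):
        disp = track[lag:] - track[:-lag]  # displacements over this lag
        msd[lag - 1] = np.mean(np.sum(disp**2, axis=1))
    return msd


def anomalous_exponent(msd: np.ndarray, dt: float) -> float:
    """Fit MSD(t) ~ t**alpha by linear regression in log-log space."""
    lags = dt * np.arange(1, len(msd) + 1)
    alpha, _intercept = np.polyfit(np.log(lags), np.log(msd), 1)
    return float(alpha)


def classify(alpha: float, tol: float = 0.2) -> str:
    """Label motion by its anomalous exponent (tolerance is an assumption)."""
    if alpha < 1 - tol:
        return "subdiffusive"
    if alpha > 1 + tol:
        return "superdiffusive"
    return "normal"


# Usage: a simulated 2D Brownian track should yield alpha close to 1.
rng = np.random.default_rng(0)
brownian = np.cumsum(rng.normal(scale=0.1, size=(10_000, 2)), axis=0)
alpha_bm = anomalous_exponent(time_averaged_msd(brownian, max_lag=10), dt=0.01)
print(f"alpha = {alpha_bm:.2f} ({classify(alpha_bm)})")
```

Fitting only short lags keeps the estimate stable, since long-lag MSD values are averaged over few displacement pairs; confined or transiently trapped molecules show an MSD that flattens, which lowers the fitted α.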

The microtubule cytoskeleton has proven to be an effective target for cancer therapeutics. One class of drugs, known as microtubule stabilizing agents (MSAs), binds to microtubule polymers and stabilizes them against depolymerization. The prototype of this group of drugs, Taxol, is an effective chemotherapeutic agent used extensively in the treatment of human ovarian, breast, and lung carcinomas. Although electron crystallography and photoaffinity labeling experiments determined that the binding site for Taxol is in a hydrophobic pocket in beta-tubulin, little was known about the effects of this drug on the conformation of the entire microtubule. A recent study from our laboratory utilizing hydrogen-deuterium exchange (HDX) in concert with various mass spectrometry (MS) techniques has provided new information on the structure of microtubules upon Taxol binding. In the current study we apply this technique to determine the binding mode and the conformational effects on chicken erythrocyte tubulin (CET) of another MSA, discodermolide, whose synthetic analogues may have potential use in the clinic. We confirmed that, like Taxol, discodermolide binds to the taxane binding pocket in beta-tubulin. However, as opposed to Taxol, which has major interactions with the M-loop, discodermolide orients itself away from this loop and toward the N-terminal H1-S2 loop. Additionally, discodermolide stabilizes microtubules mainly via its effects on interdimer contacts, specifically on the alpha-tubulin side, and to a lesser extent on interprotofilament contacts between adjacent beta-tubulin subunits. Also, our results indicate complementary stabilizing effects of Taxol and discodermolide on the microtubules, which may explain the synergy observed between the two drugs in vivo.

The orthogonal array of axon pathways in the Drosophila CNS is constructed in part under the control of three Robo family axon guidance receptors: Robo1, Robo2 and Robo3. Each of these receptors is responsible for a distinct set of guidance decisions. To determine the molecular basis for these functional specializations, we used homologous recombination to create a series of 9 "robo swap" alleles: expressing each of the three Robo receptors from each of the three robo loci. We demonstrate that the lateral positioning of longitudinal axon pathways relies primarily on differences in gene regulation, not distinct combinations of Robo proteins as previously thought. In contrast, specific features of the Robo1 and Robo2 proteins contribute to their distinct functions in commissure formation. These specializations allow Robo1 to prevent crossing and Robo2 to promote crossing. These data demonstrate how diversification of expression and structure within a single family of guidance receptors can shape complex patterns of neuronal wiring.

The superficial superior colliculus (sSC) occupies a critical node in the mammalian visual system; it is one of two major retinorecipient areas, receives visual cortical input, and innervates visual thalamocortical circuits. Nonetheless, the contribution of sSC neurons to downstream neural activity and visually guided behavior is unknown and frequently neglected. Here we identified the visual stimuli to which specific classes of sSC neurons respond, the downstream regions they target, and transgenic mice enabling class-specific manipulations. One class responds to small, slowly moving stimuli and projects exclusively to lateral posterior thalamus; another, comprising GABAergic neurons, responds to the sudden appearance or rapid movement of large stimuli and projects to multiple areas, including the lateral geniculate nucleus. A third class exhibits direction-selective responses and targets deeper SC layers. Together, our results show how specific sSC neurons represent and distribute diverse information and enable direct tests of their functional role.

Although anatomical, lesion, and imaging studies of the hippocampus indicate qualitatively different information processing along its septo-temporal axis, the physiological mechanisms supporting such a distinction are missing. We found fundamental differences between the dorsal (dCA3) and the ventral-most parts (vCA3) of the hippocampus in both environmental representation and temporal dynamics. Discrete place fields of dCA3 neurons evenly covered all parts of the testing environments. In contrast, vCA3 neurons (1) rarely showed continuous two-dimensional place fields, (2) differentiated open and closed arms of a radial maze, and (3) exhibited similar firing patterns with respect to the goals, both on multiple arms of a radial maze and during opposite journeys in a zigzag maze. In addition, theta power and the fraction of theta-rhythmic neurons were substantially reduced in the ventral compared with the dorsal hippocampus. We hypothesize that spatial representation decreases progressively along the septo-temporal axis of the hippocampus. This change is paralleled by a reduction of theta rhythm and an increased representation of nonspatial information.