2838 Janelia Publications

Showing 371-380 of 2838 results

The ability of fluorescence microscopy to simultaneously image multiple specific molecules of interest has allowed biologists to infer macromolecular organization and colocalization in fixed and live samples. However, a number of factors could affect these analyses, and colocalization is a misnomer. We propose image similarity coefficient as a better and more descriptive term. In this chapter we will discuss many of the factors involved with determining image similarity, including our perception of color in images. In addition, the correct use of several commonly accepted methods such as Pearson’s correlation coefficient, Manders’ overlap coefficient, and Spearman’s ranked correlation coefficient is discussed.

Chromosome inversions are of unique importance in the evolution of genomes and species because when heterozygous with a standard arrangement chromosome, they suppress meiotic crossovers within the inversion. In Drosophila species, heterozygous inversions also cause the interchromosomal effect, whereby the presence of a heterozygous inversion induces a dramatic increase in crossover frequencies in the remainder of the genome within a single meiosis. To date, the interchromosomal effect has been studied exclusively in species that also have high frequencies of inversions in wild populations. We took advantage of a recently developed approach for generating inversions in Drosophila simulans, a species that does not have inversions in wild populations, to ask if there is an interchromosomal effect. We used the existing chromosome 3R balancer and generated a new chromosome 2L balancer to assay for the interchromosomal effect genetically and cytologically. We found no evidence of an interchromosomal effect in D. simulans. To gain insight into the underlying mechanistic reasons, we qualitatively analyzed the relationship between meiotic double-strand break formation and synaptonemal complex assembly. We find that the synaptonemal complex is assembled prior to double-strand break formation as in D. melanogaster; however, we show that the synaptonemal complex is assembled prior to localization of the oocyte determination factor Orb, whereas in D. melanogaster, synaptonemal complex formation does not begin until Orb is localized. Together, our data show heterozygous inversions in D. simulans do not induce an interchromosomal effect and that there are differences in the developmental programming of the early stages of meiosis.

Phosphatidylinositol (PI) is an inositol-containing phospholipid synthesized in the endoplasmic reticulum (ER). PI is a precursor lipid for PI 4,5-bisphosphate (PI(4,5)P2) in the plasma membrane (PM) important for Ca2+ signaling in response to extracellular stimuli. Thus, ER-to-PM PI transfer becomes essential for cells to maintain PI(4,5)P2 homeostasis during receptor stimulation. In this chapter, we discuss two live-cell imaging protocols to analyze ER-to-PM PI transfer at ER-PM junctions, where the two membrane compartments make close appositions accommodating PI transfer. First, we describe how to monitor PI(4,5)P2 replenishment following receptor stimulation, as a readout of PI transfer, using a PI(4,5)P2 biosensor and total internal reflection fluorescence microscopy. The second protocol directly visualizes PI transfer proteins that accumulate at ER-PM junctions and mediate PI(4,5)P2 replenishment with PI in the ER in stimulated cells. These methods provide spatial and temporal analysis of ER-to-PM PI transfer during receptor stimulation and can be adapted to other research questions related to this topic.

New reconstruction techniques are generating connectomes of unprecedented size. These must be analyzed to generate human-comprehensible results. The analyses being used fall into three general categories. The first is interactive tools used during reconstruction, to help guide the effort, look for possible errors, identify potential cell classes, and answer other preliminary questions. The second type of analysis is support for formal documents such as papers and theses. Scientific norms here require that the data be archived and accessible, and the analysis reproducible. In contrast to some other "omic" fields such as genomics, where a few specific analyses dominate usage, connectomics is rapidly evolving and the analyses used are often specific to the connectome being analyzed. These analyses are typically performed in a variety of conventional programming languages, such as Matlab, R, Python, or C++, and read the connectomic data either from a file or through database queries, neither of which is standardized. In the short term we see no alternative to the use of specific analyses, so the best that can be done is to publish the analysis code, and the interface by which it reads connectomic data. A similar situation exists for archiving connectome data. Each group independently makes their data available, but there is no standardized format and long-term accessibility is neither enforced nor funded. In the long term, as connectomics becomes more common, a natural evolution would be a central facility for storing and querying connectomic data, playing a role similar to the National Center for Biotechnology Information for genomes. The final form of analysis is the import of connectome data into downstream tools such as neural simulation or machine learning. In this process, there are two main problems that need to be addressed. First, the reconstructed circuits contain huge amounts of detail, which must be intelligently reduced to a form the downstream tools can use. Second, much of the data needed for these downstream operations must be obtained by other methods (such as genetic or optical) and must be merged with the extracted connectome.

Automatic image segmentation is critical to scale up electron microscope (EM) connectome reconstruction. To this end, segmentation competitions, such as CREMI and SNEMI, exist to help researchers evaluate segmentation algorithms with the goal of improving them. Because generating ground truth is time-consuming, these competitions often fail to capture the challenges in segmenting larger datasets required in connectomics. More generally, the common metrics for EM image segmentation do not emphasize impact on downstream analysis and are often not very useful for isolating problem areas in the segmentation. For example, they do not capture connectivity information and often over-rate the quality of a segmentation, as we demonstrate later. To address these issues, we introduce a novel strategy to enable evaluation of segmentation at large scales either in a supervised setting, where ground truth is available, or in an unsupervised setting. To achieve this, we first introduce new metrics more closely aligned with the use of segmentation in downstream analysis and reconstruction. In particular, these include synapse connectivity and completeness metrics that provide both meaningful and intuitive interpretations of segmentation quality as it relates to the preservation of neuron connectivity. Also, we propose measures of segmentation correctness and completeness with respect to the percentage of "orphan" fragments and the concentrations of self-loops formed by segmentation failures, which are helpful in analysis and can be computed without ground truth. The introduction of new metrics intended to be used for practical applications involving large datasets necessitates a scalable software ecosystem, which is a critical contribution of this paper. To this end, we introduce a scalable, flexible software framework that enables integration of several different metrics and provides mechanisms to evaluate and debug differences between segmentations. We also introduce visualization software to help users consume the various metrics collected. We evaluate our framework on two relatively large public ground-truth datasets, providing novel insights on example segmentations.
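To make the two ground-truth-free measures mentioned in this abstract more concrete, here is a minimal sketch. The definitions are assumptions made for illustration and are not taken from the paper: a self-loop is a synapse whose pre- and postsynaptic sides fall in the same segment, and an "orphan" is a segment with fewer than a hypothetical `min_synapses` synapse endpoints. The function names and input layout are likewise hypothetical.

```python
from collections import Counter


def self_loop_fraction(synapses):
    """Fraction of synapses whose two sides lie in the same segment.

    synapses: iterable of (pre_segment_id, post_segment_id) pairs.
    A high value can hint at false merges in the segmentation.
    """
    synapses = list(synapses)
    if not synapses:
        return 0.0
    loops = sum(1 for pre, post in synapses if pre == post)
    return loops / len(synapses)


def orphan_fraction(segment_ids, synapses, min_synapses=2):
    """Fraction of segments with fewer than min_synapses synapse endpoints.

    The threshold-based orphan definition is an assumption for this sketch.
    """
    segment_ids = list(segment_ids)
    if not segment_ids:
        return 0.0
    endpoints = Counter()
    for pre, post in synapses:
        endpoints[pre] += 1   # presynaptic endpoint
        endpoints[post] += 1  # postsynaptic endpoint
    orphans = sum(1 for seg in segment_ids if endpoints[seg] < min_synapses)
    return orphans / len(segment_ids)


# Toy example with made-up segment ids and synapses.
example_segments = [1, 2, 3, 4]
example_synapses = [(1, 2), (1, 1), (2, 1), (3, 1)]
loop_frac = self_loop_fraction(example_synapses)
orphan_frac = orphan_fraction(example_segments, example_synapses)
```

Neither function needs a ground-truth labeling, which is the property the abstract highlights for these measures.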

Artificial activation of anatomically localized, genetically defined hypothalamic neuron populations is known to trigger distinct innate behaviors, suggesting a hypothalamic nucleus-centered organization of behavior control. To assess whether the encoding of behavior is similarly anatomically confined, we performed simultaneous neuron recordings across twenty hypothalamic regions in freely moving animals. Here we show that distinct but anatomically distributed neuron ensembles encode the social and fear behavior classes, primarily through mixed selectivity. While behavior class-encoding ensembles were spatially distributed, individual ensembles exhibited strong localization bias. Encoding models identified that behavior actions, but not motion-related variables, explained a large fraction of hypothalamic neuron activity variance. These results identify unexpected complexity in the hypothalamic encoding of instincts and provide a foundation for understanding the role of distributed neural representations in the expression of behaviors driven by hardwired circuits.

Intracellular recording allows precise measurement and manipulation of individual neurons, but it requires stable mechanical contact between the electrode and the cell membrane, and thus it has remained challenging to perform in behaving animals. Whole-cell recordings in freely moving animals can be obtained by rigidly fixing ('anchoring') the pipette electrode to the head; however, previous anchoring procedures were slow and often caused substantial pipette movement, resulting in loss of the recording or of recording quality. We describe a UV-transparent collar and UV-cured adhesive technique that rapidly (within 15 s) anchors pipettes in place with virtually no movement, thus substantially improving the reliability, yield and quality of freely moving whole-cell recordings. Recordings are first obtained from anesthetized or awake head-fixed rats. UV light cures the thin adhesive layers linking pipette to collar to head. Then, the animals are rapidly and smoothly released for recording during unrestrained behavior. The anesthetized-patched version can be completed in ∼4-7 h (excluding histology) and the awake-patched version requires ∼1-4 h per day for ∼2 weeks. These advances should greatly facilitate studies of neuronal integration and plasticity in identified cells during natural behaviors.

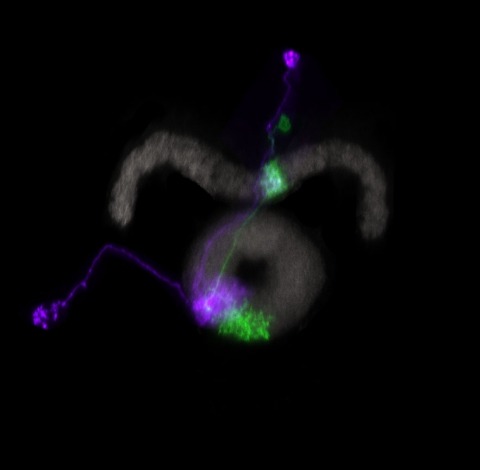

Many animals maintain an internal representation of their heading as they move through their surroundings. Such a compass representation was recently discovered in a neural population in the Drosophila melanogaster central complex, a brain region implicated in spatial navigation. Here, we use two-photon calcium imaging and electrophysiology in head-fixed walking flies to identify a different neural population that conjunctively encodes heading and angular velocity, and is excited selectively by turns in either the clockwise or counterclockwise direction. We show how these mirror-symmetric turn responses combine with the neurons' connectivity to the compass neurons to create an elegant mechanism for updating the fly's heading representation when the animal turns in darkness. This mechanism, which employs recurrent loops with an angular shift, bears a resemblance to those proposed in theoretical models for rodent head direction cells. Our results provide a striking example of structure matching function for a broadly relevant computation.

The field of connectomics has recently produced neuron wiring diagrams from relatively large brain regions from multiple animals. Most of these neural reconstructions were computed from isotropic (e.g., FIBSEM) or near-isotropic (e.g., SBEM) data. In spite of the remarkable progress on algorithms in recent years, automatic dense reconstruction from anisotropic data remains a challenge for the connectomics community. One significant hurdle in the segmentation of anisotropic data is the difficulty in generating a suitable initial over-segmentation. In this study, we present a segmentation method for anisotropic EM data that agglomerates a 3D over-segmentation computed from the 3D affinity prediction. A 3D U-net is trained to predict 3D affinities by the MALIS approach. Experiments on multiple datasets demonstrate the strength and robustness of the proposed method for anisotropic EM segmentation.
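The agglomeration step described in this abstract can be pictured with a deliberately simplified sketch: fragments of an over-segmentation are merged whenever the affinity between them exceeds a threshold. This is not the method of the paper. The fixed threshold, the precomputed per-edge affinity scores, and the names `agglomerate` and `edges` are all assumptions chosen for illustration.

```python
def agglomerate(fragment_ids, edges, threshold=0.5):
    """Merge fragments joined by edges with affinity >= threshold.

    fragment_ids: iterable of fragment labels from an over-segmentation.
    edges: iterable of (fragment_a, fragment_b, affinity) triples, where
        affinity is an assumed precomputed score in [0, 1].
    Returns a dict mapping each fragment to its merged segment label.
    """
    fragment_ids = list(fragment_ids)
    parent = {f: f for f in fragment_ids}

    def find(f):
        # Union-find root lookup with path halving.
        while parent[f] != f:
            parent[f] = parent[parent[f]]
            f = parent[f]
        return f

    # Visit the most confident edges first and stop at the threshold.
    for a, b, affinity in sorted(edges, key=lambda e: e[2], reverse=True):
        if affinity < threshold:
            break
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    return {f: find(f) for f in fragment_ids}


# Toy example with made-up affinities between four fragments.
labels = agglomerate(
    [1, 2, 3, 4],
    [(1, 2, 0.9), (2, 3, 0.2), (3, 4, 0.8)],
    threshold=0.5,
)
```

A practical agglomeration would typically recompute scores between merged regions as it proceeds; the single-pass threshold here only keeps the sketch short.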